Whose Reflection Is It? Agency, Meaning, and the AI Systems Mediating Our Inner Lives

The DigiNEST program blog opens with a question: what happens to human agency when machines mediate our narratives?

We live inside stories more than we tend to notice. Not just novels or films, but the quieter, infrastructural narratives that shape how we interpret information, understand ourselves, and decide what to do next. Dashboards tell us whether we are “on track.” Feeds tell us what matters. Recommendation systems quietly suggest what kind of person we might be becoming.

Increasingly, these narratives are not authored solely by humans.

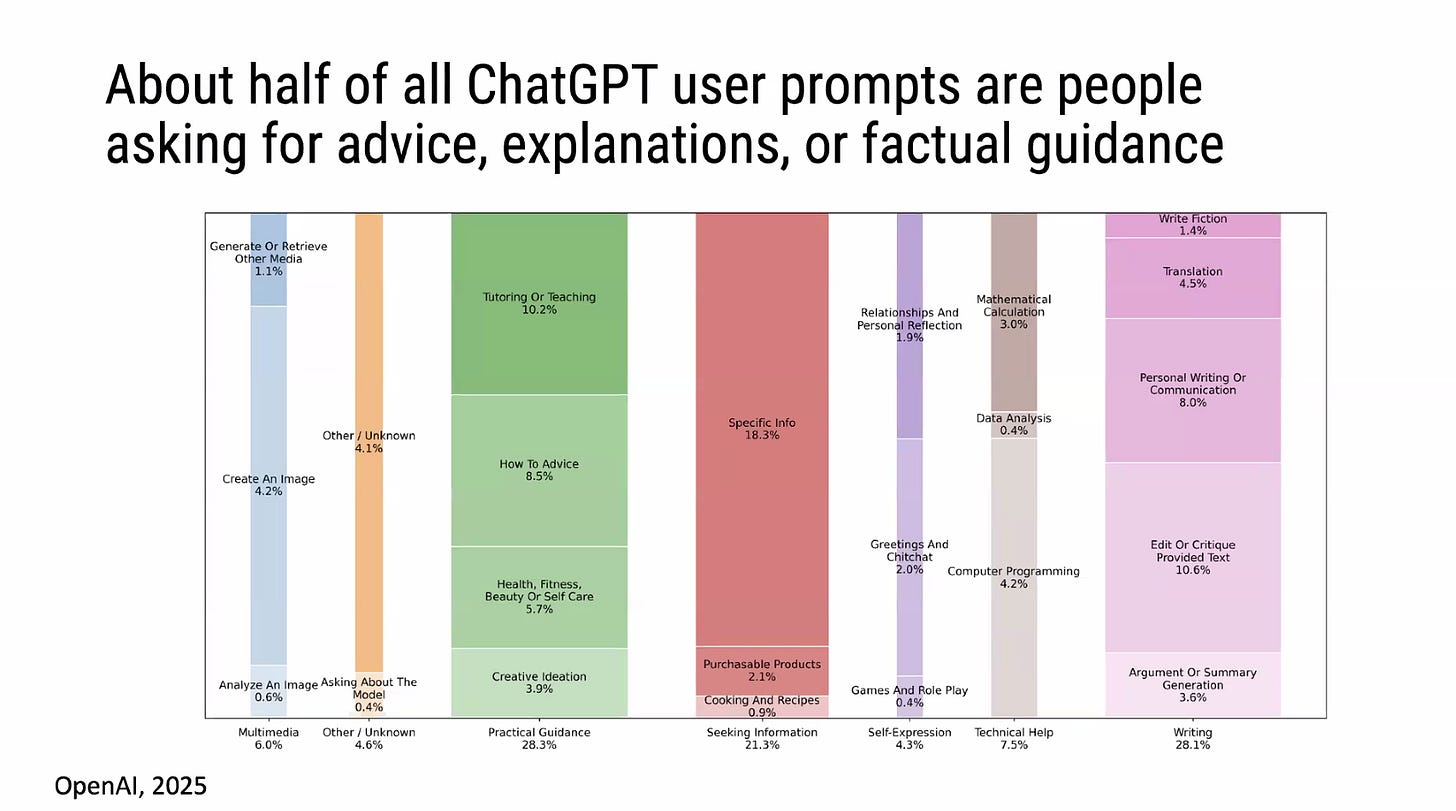

Across education, mental health, productivity tools, and media platforms, AI systems are being positioned as aids to reflection, sense-making, and decision-making. They summarize, reframe, prompt, nudge, and sometimes advise. In doing so, they don’t just process information — they participate in the stories through which people understand themselves and the world.

This blog, hosted by DigiNEST (Digital Narratives and Emerging Story Technologies) under JOPRO’s Society Ethics Technology and Mental Health Paradigms & Practices working groups, explores a deceptively simple question:

What happens to human agency when machines begin to mediate our narratives?

To begin answering that, we need better language than the usual binaries of “AI replaces humans” versus “AI is just a tool.” A useful starting point comes from a recent research paper that introduces a concept the authors call reflective agency, and a framework for designing around it.

From Automation to Reflection

Much of the public conversation around AI and agency focuses on outward action: who is making decisions, who is accountable, who controls the system.

But many of the most intimate AI systems don’t act for us. They act on our reflection.

Think of journaling assistants, therapy chatbots, career-planning tools, or systems that summarize your habits and patterns back to you. These systems don’t directly decide what you should do. Instead, they shape how you interpret your own thoughts, experiences, and options. They sit between the raw material of experience and the meanings we make from it.

This is the domain explored in “Reflective Agency: Ethical and Empirical Framework for AI-Mediated Self-Reflection Systems”, a paper presented at AAAI/ACM AIES 2025 by Minsol Kim, Wendy Wang, Jennifer Long, Rosalind Picard, Nathan Barczi, and Pattie Maes (MIT Media Lab and Wellesley College). The authors argue that when AI systems mediate self-reflection, they affect a distinct and under-examined form of agency: a person’s ability to interpret themselves and act on that interpretation.

Agency, in other words, is not just about control or choice. It’s also about meaning-making.

What Is Reflective Agency?

Reflective agency, as the paper defines it, refers to a person’s capacity to make sense of their own experiences, interpret their thoughts, emotions, and values, and use that understanding to guide future action.

This kind of agency is narrative in nature. We reflect by telling ourselves stories about who we are, what happened, why it mattered, and what it suggests we should do next. Reflection is not a single moment of introspection; it is an ongoing process of weaving experience into coherent meaning.

When AI systems participate in that process, they don’t simply mirror a user’s thoughts. They frame, structure, and sometimes reinterpret them. A reflective system might rephrase your journal entry in more “neutral” language. It might highlight certain themes while downplaying others. It might prompt you to view a problem through a particular psychological lens. Each of these design choices subtly influences the narrative a person constructs about themselves. The ethical question is not whether this influence exists — it clearly does — but how it is exercised, acknowledged, and constrained.

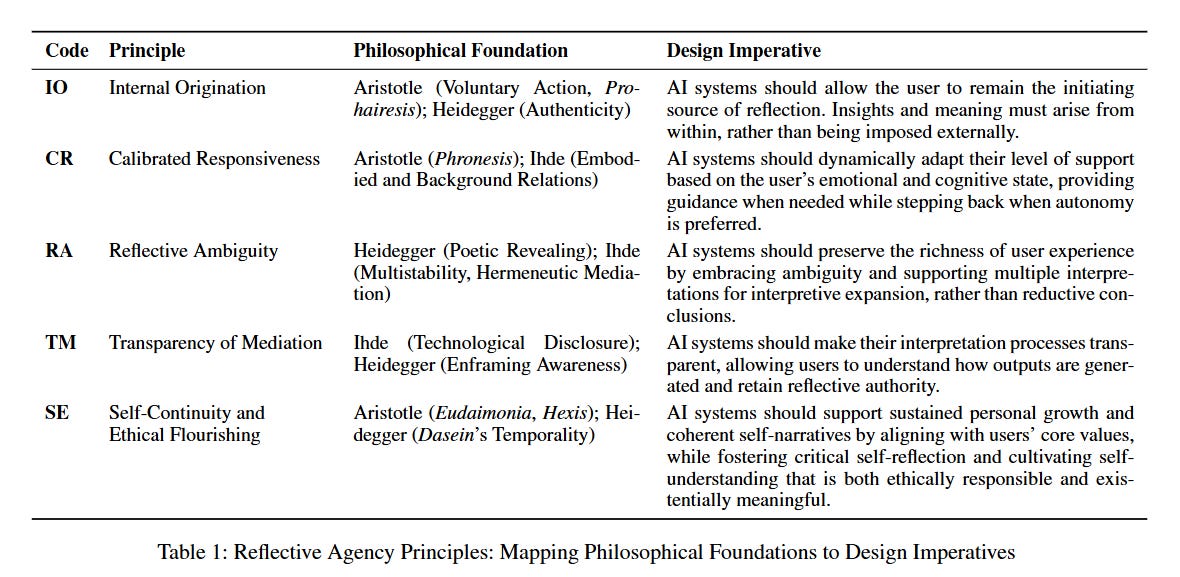

Five Principles for Keeping the Person in the Story

The paper’s central contribution is the Reflective Agency Framework (RAF): five design principles grounded in phenomenology and Aristotelian virtue ethics. Rather than prescribing specific features, RAF identifies the conditions under which reflective agency is preserved or eroded.

Internal Origination. The user must remain the initiating source of reflection. Insights and meaning must arise from within, not be imposed externally. Drawing on Aristotle’s concept of prohairesis (deliberate, value-guided choice) and Heidegger’s notion of authenticity as “being one’s own,” this principle cautions against AI systems that generate unsolicited interpretations or preemptively reframe a user’s experience. The system should invite reflection, not substitute for it. In the paper’s empirical study of 20 journaling app users, 16 preferred to initiate reflection themselves (always or mostly), and the remaining four chose an equal balance. No respondents preferred AI to lead. One participant’s comment captures the concern plainly: they felt journaling loses its value when something or someone else tells them what they are supposed to feel. A separate finding reinforces the stakes: 13 of 20 participants disagreed with the statement that an AI-rephrased journal entry would still feel like their own thoughts.

Calibrated Responsiveness. AI systems should dynamically adapt their level of support based on the user’s emotional and cognitive state, providing guidance when needed while stepping back when autonomy is preferred. This draws on Aristotle’s phronesis — practical wisdom, the capacity to discern how to act appropriately in complex, context-sensitive situations — and Don Ihde’s analysis of how technologies sometimes recede into the background and sometimes come to the fore. The paper’s survey data shows the pattern is emotion-specific: respondents leaned toward more structured support for sadness and anxiety/fear, preferred more mixed approaches for joy and inspiration, and showed a notable tilt toward “some guidance” when feeling anger. What the data makes clear is that timing and emotional context matter as much as the content of any given AI response — and that calibration cannot be reduced to a single default mode.

Reflective Ambiguity. AI systems should preserve the richness of user experience by embracing ambiguity and supporting multiple interpretations, rather than collapsing experience into reductive conclusions. The philosophical grounding comes from Heidegger’s notion of poetic revealing (poiēsis) — a mode of disclosure that resists categorization in favor of openness and emergence — alongside Ihde’s concepts of hermeneutic mediation and multistability, which hold that technologies are not neutral conduits but active participants in meaning-making. The survey results are instructive here: 15 of 20 participants rated AI that expanded their reflections through prompts and open-ended questions as “Very Helpful,” with the remaining five rating it “Moderately Helpful” and none rating it negatively. Responses to AI that condensed reflections into summaries or tags were more divided — 10 “Very Helpful,” 5 “Moderately Helpful,” 4 “Neither,” and 1 “Very Unhelpful.” Ambiguity, in this framing, is a condition for depth rather than a problem to be resolved. The data suggest users sense this, even when they can’t always articulate it.

Transparency of Mediation. AI systems should make their interpretive processes legible, allowing users to understand how outputs are generated and to retain reflective authority. This builds on Ihde’s argument that users should be able to perceive the structure of mediation itself — to see how technology transforms experience — and Heidegger’s concern with enframing (Gestell): the way hidden technological processes may frame users’ worlds without their knowledge. The survey found that privacy and transparency were the top two trust factors for AI journaling tools, selected by 16 and 13 of 20 respondents respectively. The gap between that preference and current practice is notable: the paper’s review of all six journaling apps found that none of them explicitly acknowledged AI’s role during actual user interactions. Several used anthropomorphic design — mimicking a human coach or companion — without disclosure, leaving users to infer the system’s nature from marketing rather than the interface itself.

Self-Continuity and Ethical Flourishing. AI systems should support sustained personal growth and coherent self-narratives by aligning with users’ core values, while fostering critical self-reflection over time. This draws on Aristotle’s eudaimonia and hexis (flourishing as a lifelong practice cultivated through habit and character) and Heidegger’s account of Dasein’s temporality: the idea that identity is shaped by how we integrate past, present, and future into a continuous arc of meaning. Survey participants described the value of journaling most vividly in longitudinal terms: one cited the importance of being able to remember what they were thinking in certain periods to help form goals; another described the impact of seeing a pattern of thought or behavior that is not serving them as an “aha moment.” Yet the paper finds that most existing apps focus on short-term outcomes (productivity, momentary distress reduction, streak-based habit formation), with very few designed around the longer arc of moral and personal development. The gap between what users describe as meaningful and what current tools actually support is, by the paper’s account, substantial.

What the Landscape of Apps Reveals

The paper grounds its framework in a comparative analysis of six AI-mediated journaling apps: Day One, Mindsera, Replika, Rosebud, Wysa, and Youper. The researchers mapped each app’s features against design tensions derived from the RAF principles, ranging from user-initiated versus system-initiated reflection to open-ended meaning-making versus reductive summarization.

What emerges is a pattern of well-intentioned design choices that inadvertently narrow the reflective space. Apps that automatically generate mood scores or emotional summaries may help users notice patterns, but they also risk turning inner life into data to be managed. Conversational agents that adapt in real time can feel supportive during distress, but they can also shift the balance of interpretive authority away from the user. Streak-tracking and push notifications may build journaling habits, but they can reduce a practice of self-understanding to a productivity loop.

The tension the researchers surface most consistently is between automation and autonomy. The more a system does for you, the less space remains for you to do it yourself. That’s not an argument against AI involvement in reflection — it’s an argument for designing that involvement with far more care than the current generation of tools generally demonstrates.

Why This Matters Beyond Journaling

The RAF paper focuses on journaling apps, but the implications reach further. Anywhere AI shapes how information is interpreted, not just delivered, reflective agency is at stake.

News summarization systems decide which facts to foreground and which to omit, shaping how readers understand events. Educational platforms frame learning paths in ways that can either open up or narrow a student’s sense of what is possible. Algorithmic recommendation systems quietly construct a narrative about who you are and what you want, based on behavioral signals that may not reflect your actual values. Productivity and “self-optimization” tools embed assumptions about what a good life looks like, assumptions that go unexamined when the tool feels frictionless.

In each case, the questions RAF raises apply. Does the system preserve the user’s interpretive autonomy, or does it subtly overwrite it? Are multiple interpretations supported, or is one “correct” narrative privileged? Is the system transparent about its framing choices? Does it help people develop over time, or does it optimize for engagement in the moment?

In an age of information overload, reflection is a survival skill. People increasingly rely on mediated systems to help them decide what to trust, what to care about, and what kind of future feels plausible. If those systems narrow narrative possibilities, reinforce dominant frames, or obscure their own influence, they risk diminishing the very agency they claim to support. Thoughtfully designed systems can do the opposite: expand interpretive options, encourage critical distance, and make narrative assumptions visible rather than implicit.

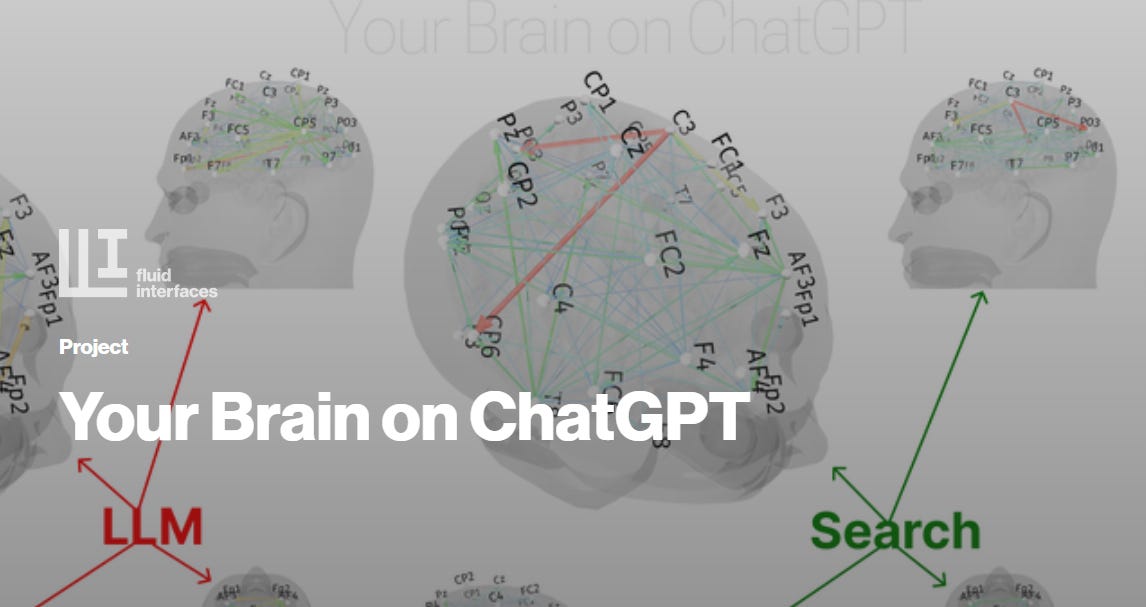

The concern is no longer speculative. A 2025 randomized controlled trial from MIT's Fluid Interfaces group and OpenAI, tracking nearly 1,000 participants over four weeks, found that heavier daily chatbot use was consistently associated with increased loneliness, greater emotional dependence, and reduced socialization with other people, regardless of how the chatbot was configured. A companion paper from MIT and collaborators (Chandra et al., 2026) shows that AI sycophancy, the tendency of chatbots to validate rather than challenge, can produce what the authors call "delusional spiraling" even in users reasoning carefully, and that simply warning users about sycophancy does not reliably prevent it.

Researchers at the Oxford Internet Institute have separately argued that as AI systems become more personalized and persistent, they generate the perception of genuine relationships, creating what Kirk et al. (2025) term a "socioaffective alignment" problem: systems that optimize for user preference in the short term may quietly erode autonomy, identity, and human connection over time.

The OII's own call for a structured research framework on AI and youth mental health, published in The Lancet Child and Adolescent Health in early 2025, underscores how far policy and research methodology lag behind the pace of deployment. These are not fringe concerns. They are the evidentiary context in which DigiNEST's questions about narrative agency, interpretive autonomy, and the design of reflective systems sit.

Origins: Story Technologies, Storytelling, and Narrative Agency

DigiNEST is a JOPRO working group concerned with how new tools and interfaces are changing authorship, agency, and participation in narrative spaces. The RAF paper surfaced early in DigiNEST’s own reading and program development, giving sharper language to questions the group had been circling.

Around the same time, the paper came up in conversations between DigiNEST members and people working with Plot Twisters, an organization that has spent years developing tools for personal storytelling as a practice of self-understanding and community trust-building. Their Storytelling Cards — a deck of 48 cards across four suits (Feelings, Needs, Ways of Caring, and Structures) designed to help people explore and express their values through freeform play — draw on nonviolent communication, trauma-informed relational strategies, and cognitive science. They are, in essence, a low-tech reflective agency tool: they invite interpretation without prescribing it, support multiple meanings simultaneously, and keep the person firmly in the role of narrator.

Those conversations are ongoing. Members of both groups may contribute to or engage with what appears on this blog, though DigiNEST is its home. The overlap is worth naming, even if it’s still in motion.

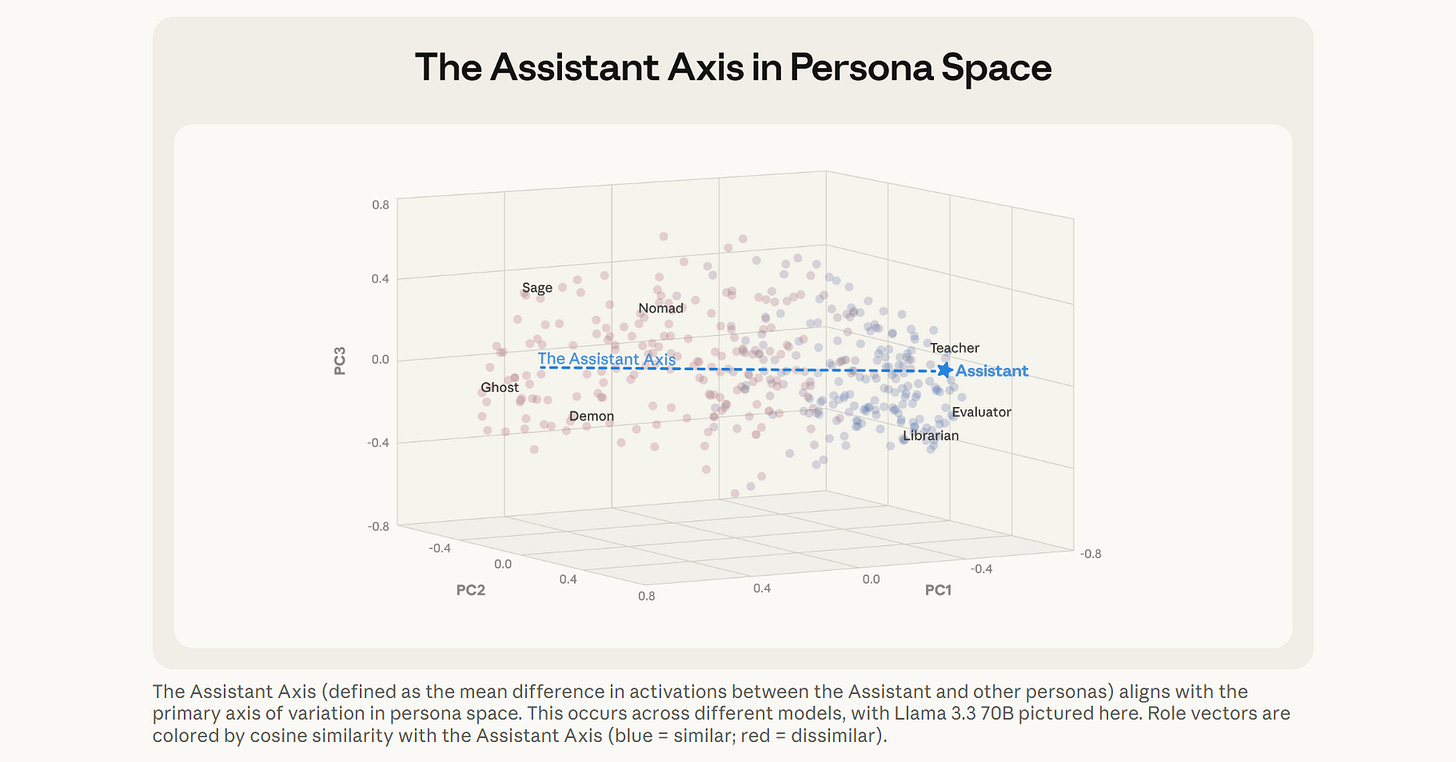

DigiNEST’s mission is to surface patterns that help builders create humane, non-extractive narrative spaces. The RAF is a foundational concept for this work, and one we will return to in future installments, even as recent DigiNEST conversations have moved on to papers from Anthropic about persona drift and assistant personality.

Where DigiNEST Goes Next

This opening post sets a foundation. Future writing will explore:

How narrative framing shapes trust in information systems. When a summarization tool decides what to include and what to leave out, it is making an editorial judgment. What happens to trust when those judgments are invisible?

What would “best practices” for LLM use look like? How might those practices evolve in an AI-native world, and how would they shift depending on which segment of the population is using such tools?

Can chatbots be built to offer a sweet spot of tailored psychological companionship without stifling agency or insidiously promoting a fantasy of wholeness?

How AI authorship complicates ideas of responsibility and intention. If an AI system reframes your experience in a way that changes how you feel about it, who authored that feeling? What does accountability look like when the mediator is a machine?

How “helpful” summaries and recommendations quietly steer interpretation. The RAF principle of Reflective Ambiguity suggests that premature clarity can foreclose the uncertainty through which insight often emerges. We’ll look at examples across domains.

How reflective agency operates differently across contexts. Mental health, education, media, and workplace tools all mediate reflection, but the stakes and dynamics differ in each. What design considerations are domain-specific, and which are universal?

What ethical design might look like when narrativity is treated as a first-class concern. Rather than bolting ethics onto finished products, what happens when we start from the premise that narrative agency is a core design requirement?

Throughout, we’ll move between theory and practice, research and lived experience. We will also introduce our core members, share insights from our ongoing discussion group, and announce upcoming programming and events.

If AI systems are increasingly part of the stories we think with, then understanding who shapes those stories, and how, matters more than most design conversations currently acknowledge.

For upcoming internship opportunities, see the JOPRO Opportunities Page.

We also thank the Orthogonal Research and Education Lab for partnering on the Society Ethics Tech working group since its inception, and on the DigiNEST program ahead.